1 Introduction

Practically every process in the living cell requires molecular recognition and formation of complexes, which may be stable or transient assemblies of two or more molecules with one molecule acting on the other, or promoting intra- and intercellular communication, or permanent oligomeric ensembles. The development of proteomics methods, such as two-hybrid assays, which provide ample information about protein–protein interactions in vivo, has modified our view of the living cell, emphasizing the importance of signaling cascades and networks of interactions. The rapid accumulation of data on protein–protein interactions, sequences, and structures calls for the development of computational methods to process and combine the information. Particularly important are the methods designed to predict structures of molecular complexes and ensembles that cannot be studied by current experimental methods; transient complexes, for example, are often too short-lived for crystallization or NMR spectroscopy. In some cases a theoretical approach is the only available tool, as for example in studies of antibody recognition of the surfaces of crystals of small organic molecules [1,2].

In docking methods, an attempt is made to predict the structures of complexes given the structures of the component molecules. In the most general case, docking procedures identify the binding sites and predict the relative geometries of the interacting molecules and their conformations in the complex. Over the past 30 years many docking approaches have been proposed (see recent reviews by [3–6]). Most of the methods fall into two categories. One uses direct thermodynamic approaches in which the free energy of the complex, described through different approximations of the enthalpy and entropy terms, is minimized (e.g., [7,8]). The other category includes empirical methods that exploit phenomenological data, such as the geometric and chemical complementarity observed in protein–protein complexes. Although at first glance they appear to be completely different, both approaches are based on the thermodynamics of intermolecular interactions, either directly through enthalpy and entropy equations, or indirectly by considering observed manifestations of the thermodynamics of molecular recognition. For example, shape complementarity reflects the extent of van der Waals interactions for a given interface. More importantly, it also provides an estimate of the number of water molecules that are released to the bulk upon complex formation (desolvation), hence of the entropy change. The latter is the driving force for complex formation in aqueous solution at room temperature [9], and therefore geometric complementarity provides a strong measure of the stability of a complex.

Different empirical docking approaches have been described, each one employing a combination of methods for representing the molecules, searching the solution space, and evaluating the quality of the different complexes. For example, approaches that use correlation for searching the solution space and assessing their quality were combined with different grid representations of the molecules (see below), a genetic algorithm was successfully combined with a molecular surface dot representation [10] or with an atomic representation [11], and computer vision techniques for matching the molecules were found to combine well with knob and hole representations [12].

In this review we focus on protein–protein docking procedures that employ various grid representations of the molecules, and use correlations for searching the solution space and evaluating the putative complexes. Notably, these algorithms treat the molecules as rigid bodies, reducing the docking problem to a six-dimensional search through the rotation–translation space. Thus, conformations of the docked molecules are not changed, although some geometric mismatching is tolerated (see below).

2 First steps in protein–protein docking using grid representations

The use of three-dimensional (3D) grids to represent molecules was introduced into molecular docking independently by Jiang and Kim [13] and Katchalski-Katzir et al. [14] at approximately the same time. Although similar, the two docking algorithms differ in several details. Jiang and Kim [13] combine two representations of the molecule: surface dots with attached surface normals as proposed by Connolly [15], and volume (interior) and surface cubes, the latter containing 2–3 surface dots each. The match between the molecular surfaces at each relative position is evaluated by the number of matching dots, requiring that the cubes containing them overlap and that their attached normals point in approximately opposite directions. The approach of Katchalski-Katzir et al. [14] is simpler in two ways. First, only one representation is employed; the molecules are digitized onto 3D grids, and the surface and the interior of the molecule are distinguished from each other by a digital process that does not require calculation of surface dots. Secondly, for each orientation the correlation function is calculated via Fast Fourier Transformations (FFT), thereby implicitly searching through all the relative translations. The grid (or the equivalent cube) representation effectively softens the surfaces of the molecules, allowing some interpenetration.

The simple and straightforward combination of grid representations with rapid matching of the molecular surfaces by calculation of a correlation function via FFT [14] appealed to a wide readership, and many research groups adopted and modified this approach.

3 The geometric FFT docking algorithm

The 3D structures of protein complexes reveal a close geometric and chemical match between those parts of the molecular surfaces that are in contact. Hence, the shape and other physical characteristics of the surfaces largely determine the nature of the specific interaction. Furthermore, in many cases the 3D structures of the components of the complex closely resemble those of the molecules in their uncomplexed state [16]. Geometric matching is therefore likely to play an important part in determining the structure of the complex.

On the basis of the considerations outlined above, Katchalski-Katzir et al. developed their docking algorithm, which initially assessed only geometric surface complementarity [14]. We describe this algorithm (later named MolFit) in some detail, because many subsequent modifications were derived from it.

The first step in MolFit is the production of grid representations of the two protein molecules a and b, derived from their atomic coordinates, as follows:

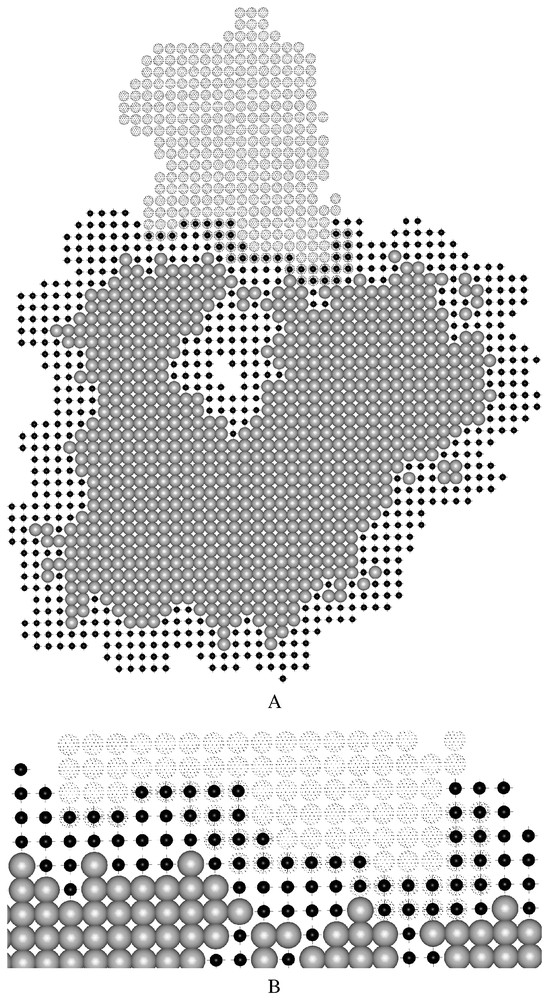

Two-dimensional cross-sections of the grid representations employed by MolFit for molecules a and b. The light gray spheres and the small black spheres denote grid points in the interior and in the surface layer of molecule a, respectively. The large dotted spheres denote grid points of molecule b, for which no distinction is made between surface and interior [14]. Molecules a and b are positioned as in the complex, therefore some of the surface grid points of molecule a overlap grid points of molecule b. Such overlaps make positive contributions to the geometric complementarity score. The interface portion of panel A is enlarged in panel B. Masquer

Two-dimensional cross-sections of the grid representations employed by MolFit for molecules a and b. The light gray spheres and the small black spheres denote grid points in the interior and in the surface layer of molecule a, respectively. The large dotted ... Lire la suite

Matching of the surfaces is accomplished by calculating the correlation function between the discrete functions

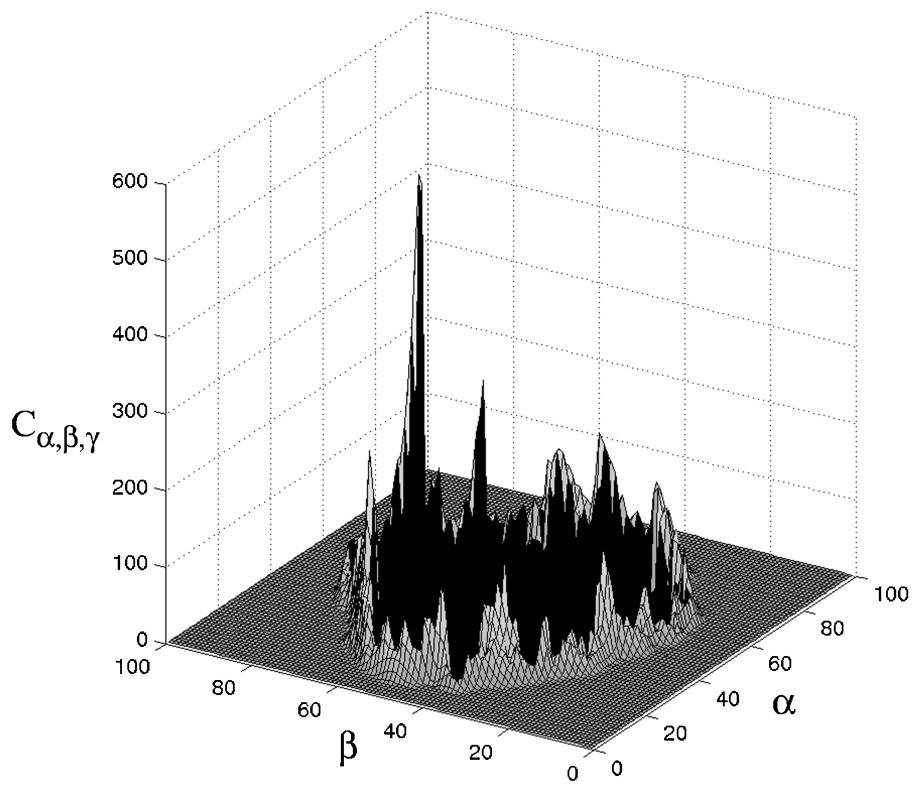

A cross-section of a typical correlation function for a good match is presented in Fig. 2. The coordinates of the prominent peak denote the relative shift of molecule b that yields a good match with molecule a. The width of the peak provides a measure of the relative displacement allowed before matching is lost.

Cross-section of a typical correlation matrix Cα,β,γ. The negative values, which represent positions with sever interpenetration, are denoted by the black area. Note the prominent peak that represents a good match. The coordinates of the peak denote the relative shift of molecule b that yields a good match with molecule a.

Direct calculation of the correlation between the two functions is a rather lengthy procedure, requiring N3 multiplications and additions for each of the N3 possible combinations of α, β, and γ, and resulting in the order of N6 computing steps. In contrast, FFT requires the order of N3·ln(N3) steps for transforming a 3D function of N×N×N values.

To complete the general search for a match between the surfaces of molecules a and b, the correlation function

Geometric docking with the MolFit algorithm, as described above, yielded excellent results for the bound docking (defined below) of four protein–protein complexes and one protein–small ligand system, identifying a ‘nearly correct’ solution (defined below) in each case and ranking it very highly (rank 1–5 before refinement and 1 after refinement).

4 Bound versus unbound docking and score versus rank

It is important to clarify the terms often used in determining the success or failure of a docking search. It is common to distinguish between bound docking, i.e. searches that employ the structures of the molecules as they appear in the complex, and unbound docking, in which the structures of one or both molecules are determined separately. In both cases, the accuracy of the predictions is limited by the rigid-body approximation and the discrete translation and rotation grids. We therefore expect that our solutions will be only ‘nearly correct’. The definition of a nearly correct solution differs in different studies that calculate the root mean square difference (RMSD) between (i) Cα atoms of interface residues, (ii) Cα atoms of the whole complex, or (iii) Cα atoms of the ligand molecule (the smaller molecule in the complex; molecule b in the description above) after superposition of the receptor molecule (the larger molecule in the complex; molecule a in the description above). Common criteria are up to 2–2.5 Å RMSD for interface residues, 3–4 Å RMSD for the whole complex, and 7–10 Å RMSD for the ligand molecule.

It is important to ensure that the algorithm not only gives a high score to the nearly correct solution, but that it also distinguishes it from other, false solutions. Therefore, another parameter that reflects the success of a docking search is the rank of the nearly correct solution. This rank, which is the position of the nearly correct solution in the list of solutions sorted by their scores, should be a small number (1 in the optimal case).

5 The first era of FFT docking (bound docking)

The first era of FFT docking was a series of attempts to improve the method of Katchalski-Katzir et al. and make it faster. Vakser and Aflalo [17] used larger grid intervals and considered only complementarity of the hydrophobic portions of the molecular surface by treating the hydrophilic parts as ‘outside the molecule’. They tested the method on four protein–protein complexes and concluded that it yielded better signal-to-noise ratios than geometric docking. Except for the large grid interval, their approach was very similar to the geometric docking of Katchalski-Katzir et al., because they assigned about 80% of the surface as hydrophobic. Meyer et al. [18] replaced the comprehensive search of the rotation–translation space by a partial search, which they limited to conformations capable of forming at least two hydrogen bonds at the interface. They applied the method to 45 complexes using the bound geometries of the molecules, and obtained high-ranking nearly correct predictions (ranking 1–3) in every case. Another procedure that limited the number of relative orientations searched was proposed by Ackermann et al. [19]. Using the FFT procedure, they matched only pre-selected pairs of surface segments. Their scores comprised a geometric complementarity term, a hydrophobic term, and an electrostatic term. Hydrophobicity values and charges were stored in separate sets of grids. The authors applied the method to 51 homo- and heterodimers, employing the bound structures. Despite the elaborate complementarity function, a nearly correct solution (ranking 1–15) with RMSD <3 Å for all Cα atoms was identified for only 18 of the 51 systems. The authors attributed their limited success to the sampling method, and reported that global sampling of the rotation–translation space, using only geometric docking, identified nearly correct solutions ranking 1 for all 51 systems [19].

Several conclusions can be drawn from the results of the abovementioned bound docking studies. First, Katchalski-Katzir et al. and Ackermann et al. obtained very good predictions using only geometric docking. Hence, geometric complementarity appears to be the most dominant term in the evaluation of different docking solutions. Secondly, an exhaustive rotation–translation scan appears to yield better results than partial scans. Thirdly, in all of these studies the docking procedures and parameters were optimized so as to improve the reproduction of known complexes. In unbound docking, however, other parameters might be more appropriate. For example, disassembled complexes were reproduced very well by the procedure of Meyer et al. [18], but the parameters, especially the strict measurements of hydrogen bond geometry, would probably be too limiting in unbound docking.

6 The first docking challenge

Docking programs were first put to the test in the prediction challenge proposed by Strynadka et al. [20]. This was the first blind prediction test, in which the predicting groups submitted their models before the experimental structure of the complex was made available. In such a challenge all participating groups study the same targets, thus eliminating two important factors that make it difficult to compare the performance of different algorithms: choice of targets, which may be harder or easier for prediction, and bias, which is naturally introduced when the predictor knows the expected results.

Six groups participated in the first docking challenge, in which they were required to predict the structure of the complex between TEM1 β-lactamase and the β-lactamase inhibitory protein (BLIP). All six submitted a nearly correct solution, ranked 1, with RMSD values ranging from 1.1 Å (the solution by Eisenstein and Katchalski-Katzir) to 2.5 Å. Very different approaches were used by the participants [20], including energy calculations and estimates of shape complementarity. Janin [21] analyzed the results in terms of the gap between the top ranking (and nearly correct) solution and the next solution. He observed that algorithms that rely on geometric complementarity produced larger gaps than algorithms employing elaborate energy functions. In particular, the electrostatic term was a poor selection criterion. Moreover, algorithms that allowed for conformation changes were not necessarily more successful than the rigid-body docking algorithms.

7 Unbound protein–protein docking

In predicting the structure of the complex between TEM1 β-lactamase and BLIP, the unbound molecular structures were docked. This started a new phase in protein–protein docking, in which the emphasis was on unbound docking. Unbound docking was initially attempted by the two groups that introduced grid representations of molecules into docking [13,14]. Both groups found that their docking algorithms were less successful when unbound structures were used. The inevitable conclusion was that geometric docking fails because structural changes occur upon complex formation. As a next step, additional energy terms (such as electrostatic interactions) were considered in the evaluation of the docking solutions. This was done within the rotation–translation scan or in the context of post-scan re-evaluation filters.

7.1 Electrostatic complementarity

Several attempts were made to introduce electrostatics as an additional term in grid-based docking. Gabb et al. [22] added a test for electrostatic complementarity to the geometric docking method of Katchalski-Katzir et al. The electrostatic descriptor of the stationary molecule was its electrostatic potential, whereas for the moving molecule partial atomic charges were used. The electrostatic descriptors were represented on separate grids and correlated using FFT, producing a Coulombic electrostatic energy term (the product of potential and charge). The electrostatic energy was not added to the geometric complementarity, but was used as a yes/no filter, eliminating docking solutions that were electrostatically unfavorable.

The concept of depicting the electrostatic potential of one molecule and partial atomic charges on the other molecule in additional grids that were distinct from the geometric grids was also employed in the algorithm of Mandell et al. [23]. In that study, however, the potential was described by solvent continuum electrostatics, which captured the effect of the different dielectric constants of the protein and the aqueous solution. In addition, Mandell et al. treated electrostatics as an additional complementarity term, which was combined with the geometric term to produce a composite energy function. Their treatment of geometric complementarity differed from that of Katchalski-Katzir et al., in that they counted the number of intermolecular collisions instead of imposing a grid-based penalty for collisions.

Electrostatic energy was used by Palma et al. as a post-scan filter [24]. These authors calculated the Coulombic energy for the top-ranking solutions from the full scan, but added a dampening constant to the inter-atomic distances to circumvent unrealistic electrostatic repulsion or attraction arising from any small interpenetrations of the docked molecules.

A somewhat different approach was proposed by Heifetz et al. [25]. Instead of calculating the electrostatic energy, which is highly sensitive to structural details and hence to conformation changes, they chose to correlate the electrostatic potentials of the molecules, which reflect their tendency to form good or bad electrostatic interactions. This was based on the previously observed pronounced anti-correlation of the electrostatic potentials at the interface [26]. Heifetz et al. used a single grid of complex numbers to describe each molecule, storing information about the shape of the molecule in the real part and information about its electrostatic character in the imaginary part. Thus,

All of the abovementioned docking procedures have been applied to unbound systems. Some of the studies presented comparisons of geometric and geometric–electrostatic docking results [22,23,25] showing that, in general, inclusion of electrostatic complementarity improved the results of geometric docking. Nevertheless, even in unbound docking, the geometric complementarity term appeared more dominant than the electrostatic term. Heifetz et al. formulated several rules for ‘good electrostatic docking’, which highlighted cases in which inclusion of electrostatic complementarity was likely to improve the geometric docking results.

7.2 Hydrophobic complementarity

Another term employed by several groups in protein–protein docking is desolvation or hydrophobic complementarity. Chen and Weng [27] combined desolvation, geometry, and electrostatics in a multiple-grid representation of each molecule. The desolvation term in that study involved calculation of the correlation between two surfaces weighted by desolvation descriptors (derived from non-pairwise atomic contact energies). This formulation rewarded interfaces with buried aliphatic hydrophobic residues and to lesser extent also those with buried aromatic residues. It reflected the entropic effect resulting from the release of water from the interface, and therefore the desolvation term was intermingled with the geometric term, that represents the same effect. Indeed, when the desolvation term was combined with electrostatics and geometric complementarity, Chen and Weng found that the latter needed to be strongly downscaled.

Berchanski et al., by placing a hydrophobic descriptor in the imaginary part of a grid of complex numbers, formulated a hydrophobic complementarity term that was detached from the geometric term [28]. Their hydrophobic complementarity term rewarded only hydrophobic–hydrophobic contacts, thereby measuring the hydrophobic surface that was packed against the hydrophobic surface of the other molecule. The hydrophobic complementarity score was added to the geometric score. Berchanski et al. found that the effect of hydrophobic complementarity in the docking of soluble proteins was generally small, except for antibody–antigen systems, where up weighting of interactions that involved aromatic residues was beneficial. They also found that intersection of solutions from geometric, geometric–electrostatic, and geometric–hydrophobic docking searches considerably improved the ranking of the nearly correct solutions.

Desolvation energy in a post-scan filter was considered by Jackson et al. [29] and by Palma et al. [24]. Neither group provided information about the effect of desolvation alone. Jackson et al. found that calculation of the desolvation energy, combined with local structure refinement, improved the ranks of nearly correct solutions for enzyme–inhibitor systems but not for antibody–antigen systems.

The different formulations of the hydrophobic effect in the studies described above emphasize the relationship between geometric complementarity and desolvation. Thus, the desolvation term of Chen and Weng [27] incorporates the geometric and the hydrophobic complementarity terms of Berchanski et al. [28]. When separated from the geometric term, hydrophobic complementarity appears to be a weak descriptor in the docking of soluble proteins; its role in the construction of oligomers is more important [28].

7.3 Binding site information

Although inclusion of electrostatic and hydrophobic complementarity terms generally improved the results of unbound docking, as discussed above, it was not enough to rank nearly correct solutions near the top. It was clear that either the dominant geometric complementarity term had to be improved, so that the shape modifications that occur upon complex formation could be more effectively tolerated, or additional information about the interaction site should be included, as is commonly done in protein–ligand docking.

Most of the groups that participated in the first docking challenge used binding-site information as a post-scan filter that eliminated false-positive solutions [20,30]. Several groups [13,22,29,31,32] made such a filter an integral part of their prediction procedure. In contrast, Ben-Zeev and Eisenstein [33] formulated an algorithm to incorporate external information from biological, biochemical, and bioinformatics studies in the scan, generating a different set of solutions, which was biased toward solutions in which several specified residues participated in binding (or did not participate, if this was the preferred option). This was done by storing weights in the imaginary part of a complex numbers grid representation, thus up-weighting or down-weighting given contacts in the geometric scan. The weighted-geometric docking procedure was successfully applied in several cases, using information extracted from bioinformatic analyses [34] or from biochemical studies [35].

Inclusion of binding-site information in the scan or as a filter proved to be a useful tool for identifying and up-ranking nearly correct solutions. Interestingly, Ben-Zeev et al. found that their procedure was successful even when definition of the binding site was approximate, i.e. only part of it was identified and up-weighted, or a portion of the weighted surface was incorrectly assigned [33].

7.4 Different shape descriptors

The dominant role of the geometric complementarity term observed in protein–protein docking suggested that modifying the representation of molecules on the grid was likely to improve the docking results. This was particularly important in the absence of external information. Over the years, different modifications of the original algorithm of Katchalski-Katzir et al. have been proposed. Eisenstein et al. used different radii for different atoms or groups of atoms, with Coulombic radii used to represent oxygen and nitrogen atoms and van der Waals radii for CHn groups, and modified the molecular surface to represent a solvent-accessible surface [36]. Chen and Weng presented a symmetrical description of the molecular shapes by using grids of complex numbers in which the imaginary part was used to store the interior of the molecule. This approach allowed different penalties to be imposed for surface–interior and interior–interior clashes [27]. The same purpose was achieved by Palma et al. [24] by employing two grids, the surface grid and the interior grid, to describe each molecule. Notably, these authors correlated the grids using Boolean operations, and were therefore able to employ much smaller grids than those used in FFT-based methods.

Several groups proposed different treatments of the outermost atoms of exposed long side chains, such as lysine and arginine, which are also known to be highly flexible. Gabb et al. [22] and Chen and Weng [27] reported that truncating these side chains worsened the docking results for most systems. Palma et al. [24] allowed unrealistic penetration of flexible side chains in their first docking step, and concluded that such softening did not improve the results. A different treatment was proposed by Heifetz and Eisenstein [37], in which the penalty for interpenetration was retained, but contacts formed by the flexible side chains were not rewarded. Their approach led to a significant reduction in the scores of false-positive solutions and improved the rankings of the nearly correct ones.

Another modification of the geometric representation of the molecules was to weight the grid points according to the number of contributing atoms, thereby rewarding positions that allow formation of more intermolecular contacts. Vakser used this approach in conjunction with low-resolution docking [38]. More recently, Chen and Weng weighted only the surface of the stationary molecule, introducing a pairwise shape complementarity descriptor [39] that favored nearly correct solutions, elevating their rankings.

7.5 The CAPRI experiment

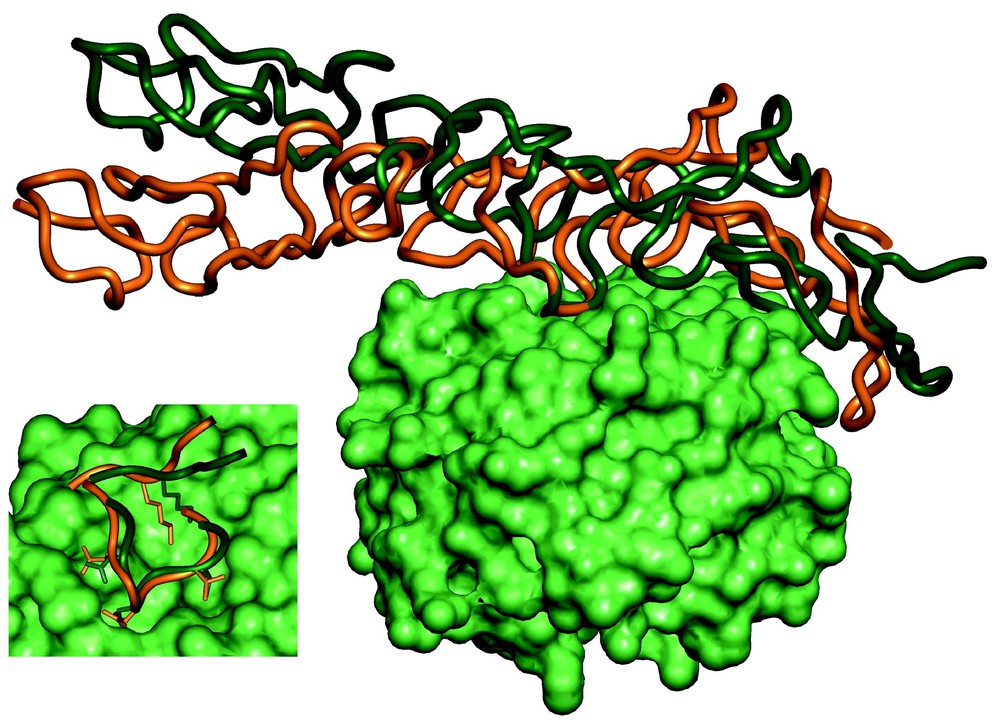

The first docking challenge, described above, was a landmark in the development of docking techniques, and stimulated interest in solving the protein–protein docking problem. This initiative was recently continued with the launching of the CAPRI (Critical Assessment of PRediction of Interactions) experiment. CAPRI is an ongoing blind docking experiment [40], which up to now has included 13 targets. The results of this experiment indicate that docking programs often produce good approximate structures of the target complexes [41]. Fig. 3 presents a superposition of the structure of the complex between the basement membrane proteins nidogen and laminin predicted by the group of M. Eisenstein (Eisenstein M., Ben-Zeev E., Atarot T. and Segal D., unpublished results) on the structure obtained experimentally [42]. The binding site is predicted quite accurately (0.8 Å RMSD), providing detailed information on the intermolecular interactions. Notably, despite the rigid-body approximation, the performance of grid-based and other procedures that treat the molecules as rigid bodies was as good as that of non-rigid-body procedures.

Comparison between the predicted structure of the nidogen–laminin complex, by Eisenstein et al., and the experimental X-ray structure [42]. The nidogen molecules in the predicted and experimental structures were superposed. The surface of nidogen is shown in green. The elongated laminin molecule is shown as a ribbon diagram, orange for the predicted position and dark green for the experimental structure. In the insert we zoom onto the interaction site, showing that despite the deviation between the predicted and observed relative positions of the molecules, most of the binding-site interactions are correctly predicted. Masquer

Comparison between the predicted structure of the nidogen–laminin complex, by Eisenstein et al., and the experimental X-ray structure [42]. The nidogen molecules in the predicted and experimental structures were superposed. The surface of nidogen is shown in green. The ... Lire la suite

8 Where do we go from here?

The development of docking techniques has progressed significantly over the past few years, starting with bound docking, then proceeding to the far more realistic test of unbound docking, and continuing with blind docking challenges, which provide common ground for comparison of the performance of different docking procedures. Geometric complementarity appears to be an essential feature in complex formation and a powerful descriptor even in unbound docking. Clearly, there is still place for development of new docking techniques and improvement of the old ones. In particular, the approximate solutions provided by most docking programs need to be refined and the question of major conformation changes must be addressed.

The available data on sequence, structure, and intermolecular interaction, as well as our view of the activity in a living cell, are now very different from the situation when docking programs started to emerge, and are likely to change continuously. The development of docking programs will inevitably follow this change. It is likely for example, that many of the structures used for docking will be models at different levels of accuracy, and that docking techniques will evolve to meet this new challenge. Also, new questions will be asked: not only ‘How do molecules a and b bind?’, but also ‘Do molecules a and b bind?’ or ‘Do molecules a, b, c, etc. form an assembly, and if so, how?’ Up to now, model structures have been docked in only a few studies. The low-resolution docking procedure of Vakser et al., which was designed to dock low-accuracy structures [43], has in some cases successfully predicted the structures of complexes starting from very approximate molecular models [44]. Berchanski et al. have succeeded in constructing homo-tetramers from model structures of single subunits by combining molecular modeling with docking [45]. Similarly, only a few attempts have been made to combine more than two domains, subunits, or proteins into assemblies via docking [10,36,45–47], and to our knowledge only one group has attempted to distinguish between true and false protein–protein partners [25].

Docking must also be considered within the larger context of biological sciences. During the past few decades many proteins have been detected in the living cell. Since all of them were located within the relatively small cell volume, an extraordinarily large number of protein–protein interactions could be anticipated. New immunological, genetic, and chemical techniques have been used to identify well-characterized protein complexes in yeast, bacteria, and the fruit fly Drosophila. Some of the proteins were found to interact with only a single partner, whereas others interacted specifically with ensembles of selected proteins. The accumulating information on specific protein–protein interactions led to the construction of maps that clearly showed networks of interactions in the living yeast cells [48–50], bacteria [51] and fruit fly [52].

Most of the interacting proteins in a living cell possess characteristic specific biological activities, which can be arrested or enhanced by interactions with other proteins. Activation of a living cell, either by external stimuli (such as environmental changes or binding of biologically active ligands) or by internal stimuli (such as gene order), is expected to trigger cascades of protein–protein interactions that lead to the desired cellular response. The graphic representation of the flow of information, which is an integral part of protein–protein interaction maps, is expected to uncover such cascades, in which each branch represents a specific signal or information transfer event. Some of the interactions in the cascade may be of extremely short duration, possibly facilitated by an intermolecular contact that does not correspond to the lowest free energy complex. Moreover, during the information transfer some of the proteins within the cell may be destroyed and novel proteins synthesized. Hence, although regular small subsets of interactions have been identified within cellular interaction networks [53], allowing the activity of the cell to be viewed in terms of ‘engineering modules’, the whole cellular network is much more complex than the networks familiar to engineers [54].

We would like to conclude by emphasizing that protein–protein networks exist and transfer biological information using the same factors as those determining protein docking. Any attempt to intervene in cellular processes by changing the information flow within the living cell (e.g., by administration of drugs) requires thorough and detailed understanding of the interactions between the molecules. Such understanding can be provided by docking techniques.

Acknowledgements

We acknowledge the essential contribution of Dr. Isaac Shariv to the development of the MolFit algorithm. MolFit can be downloaded from our web site: http://www.weizmann.ac.il/Chemical_Research_Support/molfit/.